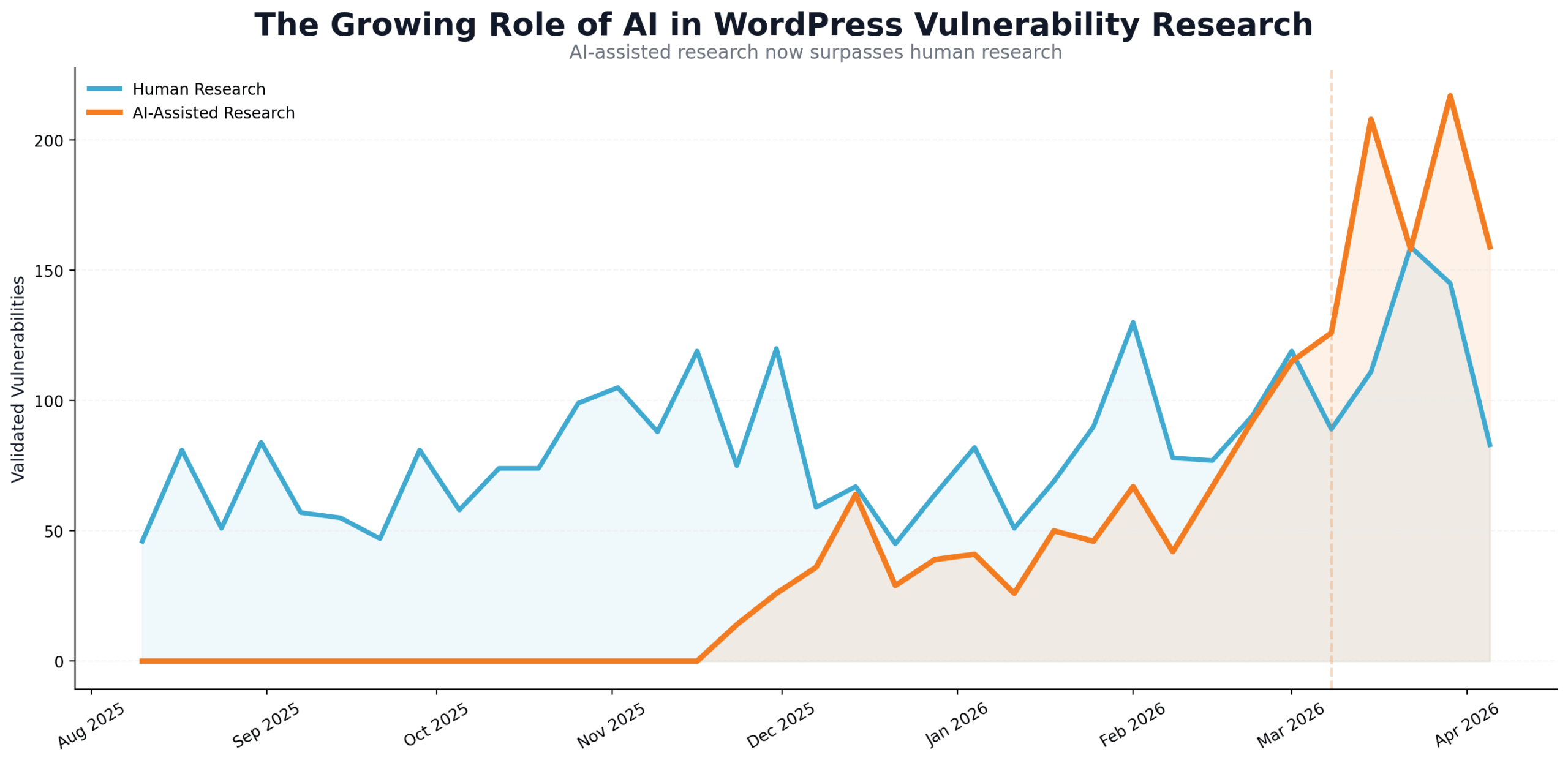

At Wordfence, we run a bug bounty program that pays researchers mid-six figures per year for WordPress-related vulnerabilities. Funding this research helps us improve security for the WordPress community overall, and helps us secure our customers by rolling out protection for new vulnerabilities as they’re discovered. In late November 2025, we began tracking which vulnerability submissions used AI in some form. These statistics are self-reported by researchers.

In the first week of full tracking, 16% of vulnerability reports said the researcher had used some form of AI during the research. Today, approximately 66% of reports say the researcher used some form of AI to assist in the vulnerability research.

In March and April 2026, AI-assisted vulnerability reports have overtaken reports that do not mention AI assistance. We pay researchers the same for AI-assisted and non-assisted research, so there’s no incentive to misreport.

In addition, submission volume began increasing in October 2025, and since then it has grown by 453%. This has created an internal need to scale up our vulnerability triage process, and our human threat intelligence team now uses a multi-agent pipeline to grow triage capacity faster than the more than 4X growth we’ve seen in reports.

Will AI Replace Human Researchers?

We do not see a near-term future where AI replaces human researchers. Internally, we are building cognitive architectures that combine OpenAI, Anthropic, and Google’s AI products to produce impressive results in vulnerability hunting, triage, and data processing. There are exciting opportunities for human researchers in vulnerability research and in cybersecurity as a whole.

But it’s easy to make hand-wavy claims, so I’d like to give you a few examples of areas that we’re watching and technologies that we’re experimenting with:

- Distillation of larger models into smaller, highly capable models, like the recent Gemma 4 release, yields faster and cheaper models that make vulnerability research at scale practical.

- Semantic routing – in other words, using vector embeddings to represent the semantic meaning of a prompt and then routing based on that meaning or level of complexity – is a powerful enabler for multi-LLM architectures that can drive down costs and increase cognitive performance and throughput.

- We think fine-tuning, in its various forms, on agentic trajectories (the recorded reasoning steps, tool calls, and results of an agent’s vulnerability hunting runs) is a fruitful line of research.

- Security-specific cognitive architectures that rely on “experts” in a range of fields, including experts in specific vulnerability classes, have yielded impressive results internally. We are actively experimenting with combining this approach with LoRA (low-rank adaptation) fine-tuning on agentic trajectories to create expert models that can be added to a cognitive architecture.
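Semantic routing and an architecture of “experts” fit together naturally: a router decides which expert (or which cheaper or more expensive model) should handle each prompt. The Python below is a minimal, hypothetical sketch of that idea, not our internal implementation. The route names (`sqli_expert`, `xss_expert`, `general_model`), their descriptions, and the `threshold` value are invented for illustration, and `embed` is a toy bag-of-words stand-in for a real embedding model, so it measures word overlap rather than true semantic meaning.

```python
import math
import re
from collections import Counter

# Hypothetical routes: each "expert" is described by a short text profile.
ROUTES = {
    "sqli_expert": "sql injection query database prepare wpdb unsanitized input where clause",
    "xss_expert": "cross site scripting xss echo output escaping html attribute javascript",
    "general_model": "general question summary explain plugin overview",
}


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words term-count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())  # missing terms count as 0
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Embed each route description once, up front.
ROUTE_VECTORS = {name: embed(desc) for name, desc in ROUTES.items()}


def route(prompt: str, threshold: float = 0.1, fallback: str = "general_model") -> tuple[str, float]:
    """Send the prompt to the most similar route, or to the fallback if nothing is similar enough."""
    query = embed(prompt)
    best_name, best_score = fallback, 0.0
    for name, vector in ROUTE_VECTORS.items():
        score = cosine(query, vector)
        if score > best_score:
            best_name, best_score = name, score
    if best_score < threshold:
        return fallback, best_score
    return best_name, best_score


print(route("Is this wpdb query vulnerable to SQL injection?"))  # routes to sqli_expert
print(route("hello there"))  # no overlap with any route, so it falls back to general_model
```

In a production router, the same pattern would compare a real embedding of the prompt against embeddings of each route. The fallback could also point to a larger, more expensive model, so that only prompts matching no cheap specialist pay for the most capable model.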

There are many more areas where security researchers, with their broad and deep threat and architectural knowledge, can combine their skills with powerful agentic AI capability to make profound breakthroughs in security research.

It’s worth noting that many of the breakthroughs in LLM performance over the past two years have come from advances in test-time compute, meaning the computation a model spends at inference time while working on a task. AI researchers have used techniques such as chain-of-thought reasoning, planning, and state documents to get better results from existing models. These breakthroughs come from finding new ways for LLMs to think, rather than from expensive foundation-model training.

These advancements in test-time compute signal that security researchers can invent creative cognitive architectures, and new ways to interact with models, that move the entire industry forward without needing to raise several billion dollars to train a new security-focused foundation model. Research breakthroughs in cybersecurity and AI are no longer confined to mega-corporations and research institutions. It’s quite possible that an OpenClaw for security will emerge from the open-source community, and this is a very exciting prospect!

Is the “End of Vulnerabilities” Near?

Will an LLM, or a set of LLMs, be created that is so good that developers and agents no longer write vulnerabilities? It’s worth remembering that a development and QA team would need to fix every security hole in their software, while an attacker only needs to find one vulnerability to compromise a system.

This is the classic cybersecurity “Defender’s Dilemma”: an attacker only needs to be right once, while a defender needs to successfully defend against every attack. As defenders, we are at a profound disadvantage, and this is one of the reasons we take a layered approach to security.
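A simplified model makes the asymmetry concrete. This is an illustration, not a measurement, and it assumes the attacker’s chances of finding each flaw are independent. If a system contains $n$ exploitable vulnerabilities and an attacker finds any given one with probability $p$, the probability that the attacker finds at least one is:

$$
P(\text{compromise}) = 1 - (1 - p)^{n}
$$

With $p = 0.05$ and $n = 20$, this gives $1 - 0.95^{20} \approx 1 - 0.358 \approx 0.64$. Even when each individual flaw is unlikely to be found, the attacker succeeds more often than not, while the defender has to push every one of those probabilities toward zero.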

In a world where AI is exclusively available to defenders, one might imagine that exploitable vulnerabilities would almost disappear. But as the good guys gain powerful agentic AI, so do the threat actors. As programming and testing become progressively more agentic, so will attacks, exploit kits, botnets, and other offensive tools.

Anthropic and Google published detailed posts in February of this year about distillation attacks. In a distillation attack, an adversary holds thousands of agentic conversations with a high-powered frontier model such as Claude Opus 4.6, Gemini 3, or GPT-5.4, stores the entire transcript of each conversation, including tool calls and chain of thought, and then uses those transcripts to train a new model at a fraction of the cost of training a foundation model. That new model may have all safeguards removed.

We may see adversarial models emerge that have distilled much of the capability from the very best frontier models, and which enable agentic attacks at scale.

Our current view is that vulnerabilities will continue to exist, but may grow in sophistication and complexity. Entire new classes of vulnerabilities may be discovered, some by defenders and some by attackers. We also expect a continually shifting environment where, at some stages in the evolution of AI, attackers will have the advantage, and at others, defenders will. As commercial providers make breakthroughs and the open-source and local-model communities produce major innovations, those advantages will shift back and forth.

The smartest developers in the world sometimes write bugs, and a vulnerability is just a celebrity bug. It’s hard to imagine the smartest models in the world will be infallible. If we are to see the end of vulnerabilities, we would also need to see the end of bugs.

The Future of WordPress Security

At Wordfence we expect to continue to work with human researchers, assisted increasingly by AI. We don’t expect an end to vulnerabilities any time soon, and we do expect their complexity and sophistication to increase. We also expect new kinds of malware to emerge, with their development assisted by jailbroken open-weights models.

These emerging threats will present unique challenges to our team and to the wider community of online researchers, and we expect to use the most powerful tools available to solve these problems.

While it’s tempting to envision a world with bespoke on-demand publishing platforms created by agents, we think that reinventing authentication, authorization, role-based access, and all the other elements that WordPress has already solved is a risky prospect. WordPress is battle-hardened and supported by a huge community of developers, designers, security researchers, and security organizations, and using agentic capability to extend the solid foundation that WordPress provides is the more exciting, productive, and secure path forward.

Wordfence will continue to invest in WordPress security research and WordPress security researchers. We see a bright future for the platform and an incredibly exciting landscape for security researchers.

Thanks for choosing Wordfence, and we look forward to continuing to secure your WordPress investment.

Mark Maunder – Wordfence Founder and CTO.

The post The Increasing Role of AI in Vulnerability Research appeared first on Wordfence.